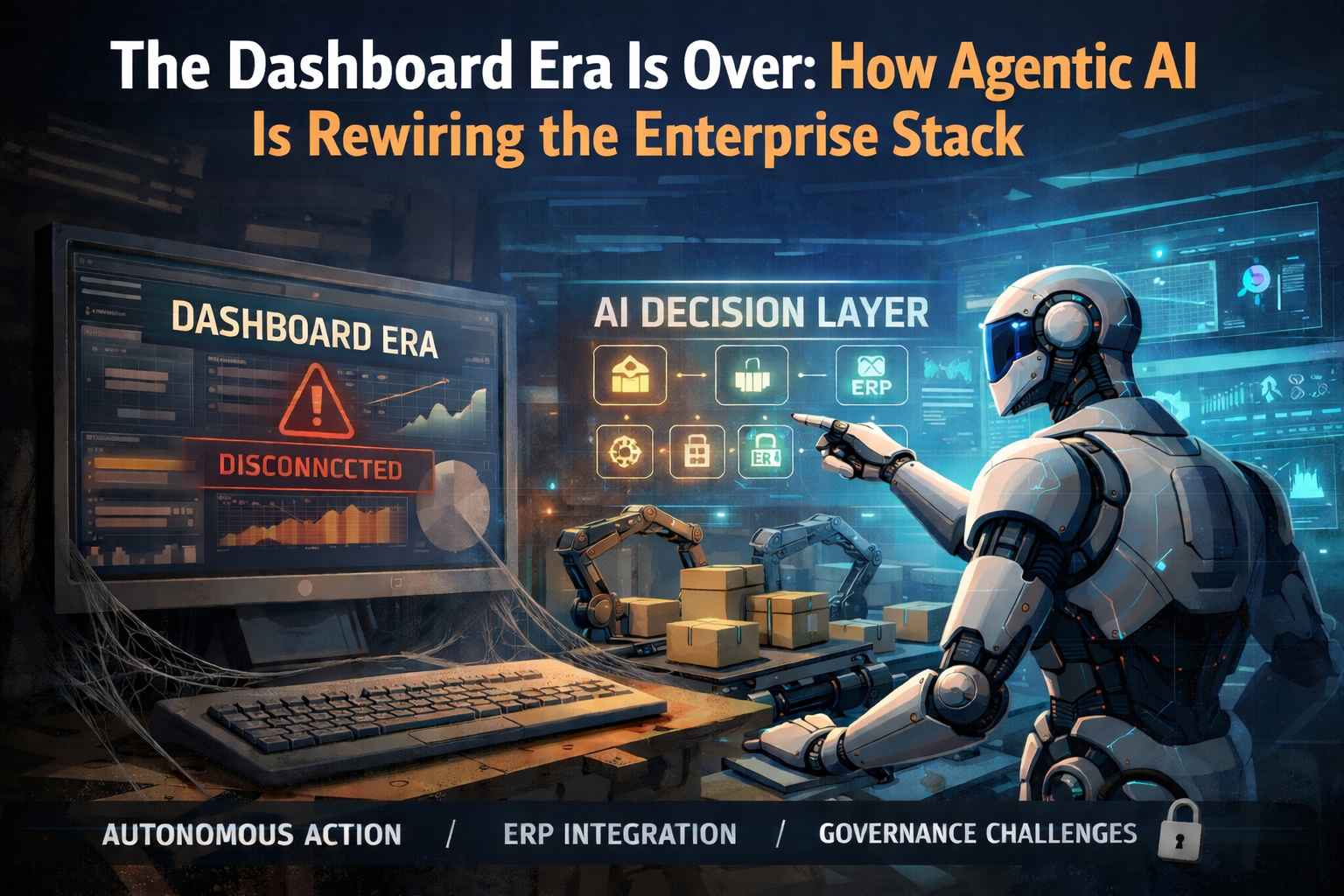

The quarterly business review used to end with a dashboard. Now it ends with a decision already made. This is not a metaphor about organizational culture — it is a description of software architecture, deployed at scale, in production, today. The agentic enterprise has arrived. And it has no patience for passive reporting.

This week crystallized a structural inflection point that those of us working at the intersection of AI, ERP systems, and supply chain operations have been tracking for three years. Several simultaneous developments — spanning model infrastructure, enterprise platform releases, open-source tooling, and regulatory pressure — have converged into a single coherent signal: the era of AI-as-dashboard is over, and the era of AI-as-decision-layer has begun.

So what: The question enterprises must now answer is not "do we have AI?" but "where in our operational stack does AI make decisions — and who governs those decisions when the system gets it wrong?"

The Agentic Turn in Enterprise Platforms

Microsoft's 2026 Release Wave 1, rolling out between April and September, is the most concrete evidence that the platform vendors have chosen their architecture. Agentic AI is being embedded across the entire Dynamics 365 stack — supply chain, finance, HR, warehousing, and ERP — not as a reporting layer, but as an execution layer. In Dynamics 365 Supply Chain Management, AI-powered agents now handle warehouse picking optimization, inventory rebalancing, demand/supply planning with price-demand correlation, and supplier communication — all within the operational workflow, not adjacent to it.

The distinction is critical. Prior generations of enterprise AI recommended actions. The 2026 generation initiates them. An agent can reroute a shipment after a delay, adjust inventory positioning, and notify procurement stakeholders without requiring human escalation for each step. The human-in-the-loop is not removed — it is repositioned to the exception rather than the rule.
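As a rough illustration of what "repositioned to the exception" can mean in practice, here is a minimal sketch of an exception-based routing rule. Everything in it is an assumption made for illustration: the `ProposedAction` fields, the `AUTONOMY_LIMITS` table, and the `MIN_CONFIDENCE` value are hypothetical and do not come from Dynamics 365 or any vendor API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    kind: str           # e.g. "reroute_shipment" (hypothetical action name)
    cost_impact: float  # estimated cost the action would commit
    confidence: float   # agent's self-reported confidence, 0.0 to 1.0


# Hypothetical policy: maximum cost an agent may commit without human review.
AUTONOMY_LIMITS = {
    "reroute_shipment": 5_000.0,
    "rebalance_inventory": 20_000.0,
}
MIN_CONFIDENCE = 0.8  # hypothetical floor below which a human must review


def route(action: ProposedAction) -> str:
    """Return "execute" for routine actions and "escalate" for exceptions."""
    limit = AUTONOMY_LIMITS.get(action.kind)
    if limit is None:
        return "escalate"  # action kinds without a policy always go to a human
    if action.cost_impact > limit or action.confidence < MIN_CONFIDENCE:
        return "escalate"
    return "execute"


routine = ProposedAction("reroute_shipment", cost_impact=1_200.0, confidence=0.93)
costly = ProposedAction("reroute_shipment", cost_impact=9_000.0, confidence=0.93)
print(route(routine), route(costly))
```

The design choice worth noting is the default: anything the policy table does not explicitly cover is escalated, so autonomy has to be granted rather than assumed.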

Simultaneously, McKinsey published a structural analysis of what it terms the "great AI agent and ERP divide": the gap between organizations deploying AI capabilities and those that have failed to ground those capabilities in the ERP's data ontology. Its finding is unambiguous: AI agents operating without a shared, ERP-grounded data definition layer produce decisions that are locally plausible but operationally misaligned. The ERP is not just a system of record; it is the definitional backbone that makes AI decisions interpretable and actionable across the enterprise.

So what: Interoperability or it doesn't scale. AI agents that cannot read and write to the same ontology as your ERP create a second source of truth — which is, operationally, no source of truth at all.

The Infrastructure Is Ready

Model infrastructure has matured to the point where enterprise deployment is no longer a research problem. Gemini 3.1 Pro now leads 13 of 16 major AI benchmarks and ties GPT-5.4 Pro on the Artificial Analysis Intelligence Index, at approximately one-third the API cost. Meta's Llama 4 Maverick, with roughly 400 billion total parameters and a 1-million-token context window (the 10-million-token window belongs to the smaller Llama 4 Scout), is among the strongest open-weight options available, and it runs on self-hosted infrastructure with no per-token licensing overhead. These are not laboratory results; they are production specifications.

NVIDIA's release of the Multi-Agent Intelligent Warehouse (MAIW) Blueprint as open source is perhaps the most operationally significant development for supply chain practitioners. MAIW provides a production-grade AI command layer that unifies WMS, ERP, IoT sensor data, and document repositories into a single operational intelligence system. It is not a proof of concept — it is a reference architecture. Organizations previously blocked by the complexity of multi-system data integration now have a documented, tested path to deployment.

The aggregate effect is measurable. Manufacturing implementations of AI-enhanced ERP are reporting 30 to 40 percent efficiency gains. AI-powered supply chain forecasting is reducing planning errors by 20 to 50 percent. Gartner projects that 40 percent of enterprise applications will feature embedded AI agents by the end of 2026 — a figure that, given current platform trajectories from Microsoft, SAP, and Oracle, appears conservative.

The Governance Gap That Threatens to Undo It All

Speed without structure produces liability. Only 38 percent of organizations currently have comprehensive AI governance policies in place, while 67 percent of business and IT decision-makers report having felt pressured to approve AI deployments despite unresolved security concerns. One in seven of those decision-makers described those concerns as "extreme" — and approved anyway. This is not a technology problem. It is an accountability architecture problem.

The regulatory environment is hardening in parallel. New state-level AI laws took effect on January 1, 2026, with California's SB 53 setting a precedent for transparency and auditability requirements that will shape procurement decisions across sectors. In the SEC's 2026 examination priorities, AI governance has displaced cryptocurrency as the dominant risk topic, a signal of how rapidly institutional exposure is being recalibrated.

For enterprise operations specifically, the risks are concrete: model drift in demand forecasting, algorithmic bias in labor scheduling, unauditable inventory decisions made by agents operating outside the ERP's governance framework. These are not hypothetical failure modes — they are the predictable consequences of deploying decision-making systems without commensurate monitoring infrastructure.

So what: KPIs before APIs. Organizations that define what "a good decision" looks like — in measurable, auditable terms — before deploying agents are the ones that will survive the regulatory scrutiny coming in 2026 and beyond.

The LATAM Dimension: Sovereignty or Dependency

Latin America's position in this inflection point is both precarious and strategically clarifying. The region accounts for 6.6 percent of global GDP but attracts just 1.1 percent of worldwide AI investment — a structural gap that compounds with each successive wave of model infrastructure controlled by US and Chinese hyperscalers. The Latin America AI market is valued at approximately USD 29.55 billion and is projected to grow at a 37 percent CAGR through 2034, reaching USD 504 billion. The question is not whether growth will occur — it is whether that growth will be captured locally or extracted externally.
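The growth projection above can be sanity-checked with compound-growth arithmetic. The sketch below uses only the three figures quoted in the text; the nine-year horizon (a 2025 base year running through 2034) is an assumption, since the base year is not stated.

```python
import math

base_usd_bn = 29.55     # current market size quoted in the text
cagr = 0.37             # compound annual growth rate quoted in the text
target_usd_bn = 504.0   # projected 2034 size quoted in the text
years = 9               # assumption: 2025 base year through 2034

# Compound growth: future = base * (1 + rate) ** years
projected = base_usd_bn * (1 + cagr) ** years

# Invert the formula to find how many years the quoted target implies.
implied_years = math.log(target_usd_bn / base_usd_bn) / math.log(1 + cagr)

print(f"projected after {years} years: {projected:.0f} bn USD")
print(f"years implied by the target: {implied_years:.2f}")
```

Under that assumption the compounded figure lands at roughly USD 502 billion, so the quoted numbers are internally consistent with a nine-year horizon, allowing for rounding in the stated growth rate.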

Chile's National Center for Artificial Intelligence (CENIA) has launched Latam-GPT, a regional language model trained on over 8 terabytes of Spanish and Portuguese text from more than 30 institutions across Argentina, Chile, Brazil, Colombia, Mexico, Peru, Ecuador, and Uruguay. With approximately 50 billion parameters, it is not designed to compete with frontier models on raw capability — it is designed to encode regional linguistic, cultural, and historical context that US-centric models systematically misrepresent. Brazil's concurrent $4 billion national AI plan, structured around sovereign cloud infrastructure and a Portuguese-language LLM, reinforces the same strategic logic.

For enterprise AI deployment in Argentina and across LATAM, this creates a genuine fork in the architecture decision: build on sovereign, regionally grounded foundations that may require more integration work, or adopt hyperscaler platforms that offer faster time-to-deployment but import dependency structures that constrain long-term optionality. Neither choice is obviously correct. Both have governance implications that demand explicit acknowledgment.

So what: From pilot to policy — LATAM enterprises that treat AI as a vendor relationship will find themselves subject to pricing, terms, and data governance decisions made in San Francisco or Beijing. Those that invest in regional capability now are building a different kind of balance sheet.

Socradata Perspective

The convergence of events this week describes, precisely, the market condition Socradata was built to address. ERP vendors are embedding agents. The model infrastructure is production-ready. Governance frameworks are lagging. And enterprises — particularly in LATAM — are navigating a complex layering of platform choices, data ownership questions, and operational accountability gaps.

Socradata's positioning as the intelligence layer on top of enterprise systems — whether Infor WMS, SAP, or Oracle — is not a consulting pitch. It is an architectural response to a documented problem: the gap between the operational data locked in ERP transactions and the decision-making capability that data should enable. We do not build dashboards. We build the systems that decide — with the governance infrastructure to make those decisions auditable, retrainable, and operationally aligned.

The organizations that will lead in 2027 are the ones that, in April 2026, stopped asking "can our ERP generate this report?" and started asking "what decisions should our ERP be making — and are those decisions governed?" That is the conversation Socradata exists to have.

The Operational Imperative

Three actions separate organizations that will navigate this transition effectively from those that will spend the next eighteen months in proof-of-concept (POC) theater.

First, ground AI in ERP ontology before deploying agents. If your AI agents operate on data definitions that are inconsistent with or disconnected from your ERP's master data, every decision they make is built on a foundation that will fracture under audit. The shared ontology is not optional infrastructure — it is the condition of possibility for trustworthy autonomous decision-making.
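One lightweight way to act on this first step is to compare the data definitions an agent uses against the ERP's master data before the agent is allowed to run. The sketch below is illustrative only: the `ERP_ONTOLOGY` table, its field names, and the `(type, unit)` convention are hypothetical stand-ins for whatever a real ERP exposes.

```python
# Hypothetical ERP master-data definitions: field name -> (type, unit).
ERP_ONTOLOGY = {
    "item_id": ("str", None),
    "on_hand_qty": ("float", "EA"),
    "lead_time": ("int", "days"),
}


def ontology_mismatches(agent_schema: dict) -> list[str]:
    """List every way the agent's schema diverges from the ERP definitions."""
    problems = []
    for field, definition in agent_schema.items():
        if field not in ERP_ONTOLOGY:
            problems.append(f"{field}: not defined in ERP master data")
        elif definition != ERP_ONTOLOGY[field]:
            problems.append(
                f"{field}: agent uses {definition}, ERP uses {ERP_ONTOLOGY[field]}"
            )
    return problems


agent_schema = {
    "item_id": ("str", None),
    "lead_time": ("int", "weeks"),    # unit disagrees with the ERP
    "safety_stock": ("float", "EA"),  # field the ERP does not define
}
issues = ontology_mismatches(agent_schema)
for issue in issues:
    print(issue)
```

A check like this catches the quiet failures, such as a lead time read in weeks where the ERP stores days, that otherwise surface only when a decision is audited.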

Second, define your KPIs before selecting your APIs. What constitutes a correct inventory rebalancing decision? What forecast error threshold triggers model retraining? What labor allocation outcome justifies agent autonomy versus human override? These are not questions that emerge naturally from platform deployment — they must be specified in advance, embedded in monitoring infrastructure, and reviewed on a cadence that matches the model's rate of drift.
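To make the "forecast error threshold" question concrete, here is a minimal sketch that expresses one such KPI as code. It assumes mean absolute percentage error (MAPE) as the error metric and a 15 percent retraining threshold; both are illustrative choices, not recommendations from the text.

```python
def mape(actuals, forecasts):
    """Mean absolute percentage error, skipping periods with zero actual demand."""
    pairs = [(a, f) for a, f in zip(actuals, forecasts) if a != 0]
    if not pairs:
        raise ValueError("no non-zero actuals to evaluate")
    return sum(abs(a - f) / abs(a) for a, f in pairs) / len(pairs)


RETRAIN_THRESHOLD = 0.15  # hypothetical KPI: retrain when MAPE exceeds 15%


def needs_retraining(actuals, forecasts, threshold=RETRAIN_THRESHOLD):
    """Return True when forecast error has drifted past the agreed threshold."""
    return mape(actuals, forecasts) > threshold


weekly_demand = [100, 200, 150]
weekly_forecast = [110, 180, 150]
print(needs_retraining(weekly_demand, weekly_forecast))
```

The point of writing the threshold down as a named constant is that it becomes reviewable: the number can be debated, versioned, and audited independently of whichever platform produces the forecast.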

Third, map your governance exposure now. The regulatory environment of Q4 2026 will be materially more demanding than Q1 2026. Organizations that have auditable records of how their AI agents made decisions — and documented processes for correcting those decisions — will have a structural advantage when compliance requirements crystallize. Those operating on informal deployments will face retrofit costs that dwarf the original implementation.
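An "auditable record" of agent decisions can start as something quite small. The sketch below shows one possible shape, an append-only log in which each entry's hash covers the previous entry's hash so that later edits are detectable. The `DecisionLog` class and its field names are hypothetical, and a production system would also need durable storage and access control, which are out of scope here.

```python
import hashlib
import json
from datetime import datetime, timezone


class DecisionLog:
    """Append-only log where each entry's hash covers the previous entry's hash."""

    def __init__(self):
        self.entries = []

    @staticmethod
    def _digest(entry):
        # Hash every field except the hash itself, with keys in a stable order.
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def record(self, agent, decision, inputs, timestamp=None):
        """Append a decision; `inputs` must be JSON-serializable."""
        entry = {
            "agent": agent,
            "decision": decision,
            "inputs": inputs,
            "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
            "prev_hash": self.entries[-1]["hash"] if self.entries else "",
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self):
        """Return True only if no entry was altered, removed, or reordered."""
        prev = ""
        for entry in self.entries:
            if entry["prev_hash"] != prev or entry["hash"] != self._digest(entry):
                return False
            prev = entry["hash"]
        return True


log = DecisionLog()
log.record("inventory-agent", "rebalance", {"sku": "A-100", "qty": 40})
log.record("inventory-agent", "hold", {"sku": "B-200", "qty": 0})
print(log.verify())
```

Recording the inputs alongside the decision is the part that matters for correction: without them, a reviewer can see what the agent did but not why.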

Is Your Enterprise Ready for the Decision Layer?

Socradata conducts operational AI diagnostics that assess where your ERP and supply chain data can support embedded decision intelligence — and where the governance gaps need to be closed first.

Request an Operational Diagnostic